Getting Started

Temporary documentation for GMU ORC's DGX A100 Server

Hardware Specs

You can learn more about NVIDIA DGX A100 systems here:

https://www.nvidia.com/en-us/data-center/dgx-a100/

Getting Access

The DGX server is part of the new Hopper cluster. Users need to log into Hopper to submit jobs to the DGX.

Log into the Hopper cluster with:
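A minimal sketch of the login step, assuming the login host is `hopper.orc.gmu.edu` and that your Hopper username is your GMU NetID (please check both against ORC's current instructions). The helper name `hopper_login` is hypothetical; typing the `ssh` command directly works just as well.

```bash
# Assumption: Hopper's login host name; verify against ORC's current docs.
HOPPER_HOST="hopper.orc.gmu.edu"

# Hypothetical helper: log into Hopper as the given GMU NetID
# (falls back to your local username if no argument is given).
hopper_login() {
  local netid="${1:-$USER}"
  ssh "${netid}@${HOPPER_HOST}"
}

# usage: hopper_login <GMU NetID>
```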

You can log into the DGX only if you have an active job on it, that is, if

you have submitted a SLURM batch job (using sbatch) and it is actively running on the DGX, or

you have an active SLURM interactive session (using salloc) on the DGX.

Depending on why you need access to the DGX, you can take one of two approaches.

For Quick Compiling and Testing

Because the DGX has a different OS (Ubuntu 20.04 LTS) and CPU architecture (AMD EPYC Zen2), you would likely need to recompile your code on the DGX itself and run quick tests before submitting any production runs. For that purpose, you can request a small interactive session via SLURM:

If you don't need a GPU, you can request a single CPU core:

If you need a GPU, you can request one along with some CPU cores:

This will log you into the DGX as soon as the requested resource is available:
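The two requests described above might look like the sketch below. The partition `gpuq` and QoS `gpu` are the ones named in the SLURM section of this page, and the GRES name `A100.40gb` comes from the partition's GPU list; the core counts, memory and time limits are illustrative assumptions, and the helper names are hypothetical.

```bash
# Partition 'gpuq' and QoS 'gpu' are taken from this page; the resource
# sizes and time limits below are illustrative assumptions.

# CPU only: one core for compiling and quick tests.
dgx_cpu_session() {
  salloc --partition=gpuq --qos=gpu --ntasks=1 --cpus-per-task=1 \
         --mem=8G --time=01:00:00
}

# One full A100 GPU plus a few CPU cores for GPU tests.
dgx_gpu_session() {
  salloc --partition=gpuq --qos=gpu --ntasks=1 --cpus-per-task=4 \
         --gres=gpu:A100.40gb:1 --mem=32G --time=01:00:00
}
```

If a small MIG slice is enough for your tests, swapping the GRES for something like `gpu:1g.5gb:1` leaves the full GPUs free for larger jobs.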

For Production Calculations

For production runs, you can submit a batch or interactive job through SLURM and, if necessary, ssh into the DGX while the job is running.
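To ssh into the node where your job is running, you first need its host name. A sketch, assuming the DGX is reached through the `gpuq` partition as described later on this page; the helper names are hypothetical, and the `squeue` options are standard SLURM ones.

```bash
# Print the node(s) running your jobs in the gpuq partition.
# -h: no header, -u: user, -p: partition, -t R: running jobs only, -o %N: node list
my_dgx_nodes() {
  squeue -h -u "$USER" -p gpuq -t R -o %N | sort -u
}

# Hypothetical helper: ssh into the first node returned above.
ssh_to_my_dgx_job() {
  local node
  node="$(my_dgx_nodes | head -n 1)"
  if [ -z "$node" ]; then
    echo "No running gpuq job found; the DGX would refuse the connection." >&2
    return 1
  fi
  ssh "$node"
}
```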

If you try to ssh into the DGX without an active job there, your connection attempt will be declined with a message like this:

Access denied by pam_slurm_adopt: you have no active jobs on this node
Connection closed by server on port ##

Running Jobs

The DGX runs Ubuntu 20.04 LTS. You can run calculations on it by submitting jobs via SLURM in batch or interactive mode from Hopper.

Both containerized and native applications are supported. You can run:

containerized applications using Singularity containers you build or ones we provide

native applications you have compiled or those we provision using Lmod modules

These two approaches are described below.

Running Containerized Applications

We provide a growing list of Singularity containers in a shared location. You are also welcome to pull and run your own Singularity containers.

Using Shared Containers

Containers and examples available to all users can be found at /containers/dgx/Containers and /containers/dgx/Examples, respectively. The environment variable $SINGULARITY_BASE points to /containers/dgx/Containers.

Currently available containers can be viewed with:
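On the DGX this is essentially `ls $SINGULARITY_BASE`. The sketch below wraps it in a hypothetical helper that falls back to the path this page gives for `$SINGULARITY_BASE` in case the variable is not set in your shell.

```bash
# List the shared Singularity images. SINGULARITY_BASE is set on the DGX;
# the fallback path is the one this page gives for it.
list_shared_containers() {
  local base="${SINGULARITY_BASE:-/containers/dgx/Containers}"
  ls -1 "$base"
}
```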

We encourage using these shared containers because they are optimized by NVIDIA to run well on the DGX. Sharing containers also saves storage space. Please let us know if you want us to add particular containers.

Building your Own Containers

Modern containers come from many registries (Docker Hub, NGC, Singularity Hub, BioContainers, etc.), in different formats (Docker, Singularity, OCI) and for different runtimes (Docker, Singularity, Charliecloud, etc.).

Please keep in mind that you cannot build or run Docker containers directly on Hopper or the DGX. Instead, pull Docker containers, convert them to Singularity format, and run the resulting Singularity containers.

We use Docker containers pulled from the NVIDIA GPU Cloud (NGC) catalog in the examples below, but the same steps apply to containers from other sources. NGC provides simple access to GPU-optimized software for deep learning, data science and high-performance computing (HPC). An NGC account also lets you set up a private registry to manage your customized software, but you do not strictly need an account. Please see the link below for more:

NGC commands:

The example below demonstrates how to search for and pull down a GROMACS image using the NGC CLI:
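A sketch of the search step, assuming the `ngc` CLI is installed and has been configured once with `ngc config set` (which prompts for your NGC API key). The pattern syntax `org/[team/]image` and the wildcard are assumptions worth checking with `ngc registry image list --help`; the helper names are hypothetical. The actual download is done with `singularity pull`, as the next step on this page describes.

```bash
# Assumes the NGC CLI is installed and configured with 'ngc config set'.

# Search the NGC catalog for GROMACS images (pattern: org/[team/]image).
ngc_search_gromacs() {
  ngc registry image list "hpc/gromacs*"
}

# Show details, including available tags, for the GROMACS image.
ngc_gromacs_info() {
  ngc registry image info hpc/gromacs
}
```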

Pulling Docker containers and building Singularity containers:

Once you select a Docker container to use, pull it down and convert it to the Singularity image format with the following command. You need to load the singularity module first.

Here is an example for preparing a GROMACS Singularity container:
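A minimal sketch, assuming the GROMACS image lives at `nvcr.io/hpc/gromacs` on NGC and using `2020.2` purely as an illustrative tag (pick a current one from the NGC catalog). The destination is the per-user container directory listed under Storage Locations on this page; the helper name is hypothetical.

```bash
# Illustrative tag: pick a real one from the NGC catalog page for GROMACS.
GROMACS_TAG="2020.2"
# Per-user container directory listed under Storage Locations on this page.
DEST_DIR="/containers/dgx/UserContainers/${USER}"

# Pull the Docker image from NGC and convert it to a Singularity image
# named <container_name>_<tag>.sif.
pull_gromacs() {
  module load singularity
  mkdir -p "$DEST_DIR"    # no-op if the directory already exists
  singularity pull "${DEST_DIR}/gromacs_${GROMACS_TAG}.sif" \
    "docker://nvcr.io/hpc/gromacs:${GROMACS_TAG}"
}
```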

Please note that we have adopted the following conventions for naming Singularity image files:

we use SIF instead of SIMG for the file extension

we name containers as

<container_name>_<container_version/tag>.sif
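To apply this convention consistently, a small hypothetical helper can derive the file name from an image reference. It assumes Docker's default tag `latest` when none is given and does not handle digest references (`@sha256:...`).

```bash
# Hypothetical helper: derive a file name following the convention above
# from an image reference such as nvcr.io/hpc/gromacs:2020.2.
sif_name() {
  local ref="${1##*/}"                     # drop registry/namespace -> gromacs:2020.2
  local name="${ref%%:*}"                  # part before the ':'     -> gromacs
  local tag="latest"                       # Docker's default tag
  [[ "$ref" == *:* ]] && tag="${ref#*:}"   # part after the ':'      -> 2020.2
  echo "${name}_${tag}.sif"
}
```

For example, `singularity pull "$(sif_name nvcr.io/hpc/gromacs:2020.2)" docker://nvcr.io/hpc/gromacs:2020.2` writes `gromacs_2020.2.sif`.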

Also note that you can pull containers from NGC, Docker Hub or any other source, but we encourage using ones from the NGC registry when available because they are optimized for NVIDIA GPUs.

Running Native Applications

If you want to run native GPU-capable applications, you can run them much as you did on Argo:

load the module for the GPU-capable application/version

run the application
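The two steps above can be sketched as a hypothetical helper. The module name and command in the usage line are placeholders, not real DGX module names; run `module avail` on the DGX to see what is actually installed.

```bash
# Sketch of the two steps above: load a module, then run the application.
run_native() {
  local app_module="$1"; shift
  module load "$app_module" || return 1   # 1. load the module
  "$@"                                   # 2. run the application
}

# usage (placeholder names): run_native <app>/<version> <app-binary> [args...]
```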

We currently have a limited set of native applications that have been tested on the DGX; this set will grow over time.

The DGX is very different from Argo and Hopper in terms of OS, CPU and GPU architecture as well as the software stack running on it. Therefore, you would generally need to recompile your code on the DGX itself using the software stack built for the DGX. Please email orchelp@gmu.edu if you need help.

To access modules built for the DGX, first log into the DGX by starting a short interactive session:

You should see a hosts/dgx module loaded and other modules that are available to you:
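Once you are on the DGX, a quick check like the sketch below confirms that `hosts/dgx` is loaded before listing the DGX modules. The helper name is hypothetical. Lmod prints `module list` output to stderr, hence the `2>&1` before `grep`.

```bash
# Run on the DGX, inside the interactive session.
check_dgx_env() {
  # Lmod writes 'module list' output to stderr, so merge it before grep.
  if module list 2>&1 | grep -q 'hosts/dgx'; then
    echo "hosts/dgx is loaded; DGX modules are visible."
    module avail
  else
    echo "hosts/dgx not loaded: are you on the DGX?" >&2
    return 1
  fi
}
```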

Scheduling SLURM Jobs

Once you have a native or containerized application, you can run it through SLURM either interactively or using batch submission scripts. Both approaches are discussed below. To run jobs on the DGX, you need


to have a SLURM account on Hopper, and

to be eligible to use the 'gpu' Quality-of-Service (QoS)

The DGX is part of the ‘gpuq’ partition.
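To see the GPU resources in the `gpuq` partition yourself, an `sinfo` query along these lines may help: `%N` prints node names and `%G` the generic resources (GRES). The helper name is hypothetical.

```bash
# Show the nodes in the gpuq partition and their GPU resources (GRES).
# %N = node names, %G = generic resources such as gpu:A100.40gb:6.
gpuq_gres() {
  sinfo --partition=gpuq --noheader --format="%N %G"
}
```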

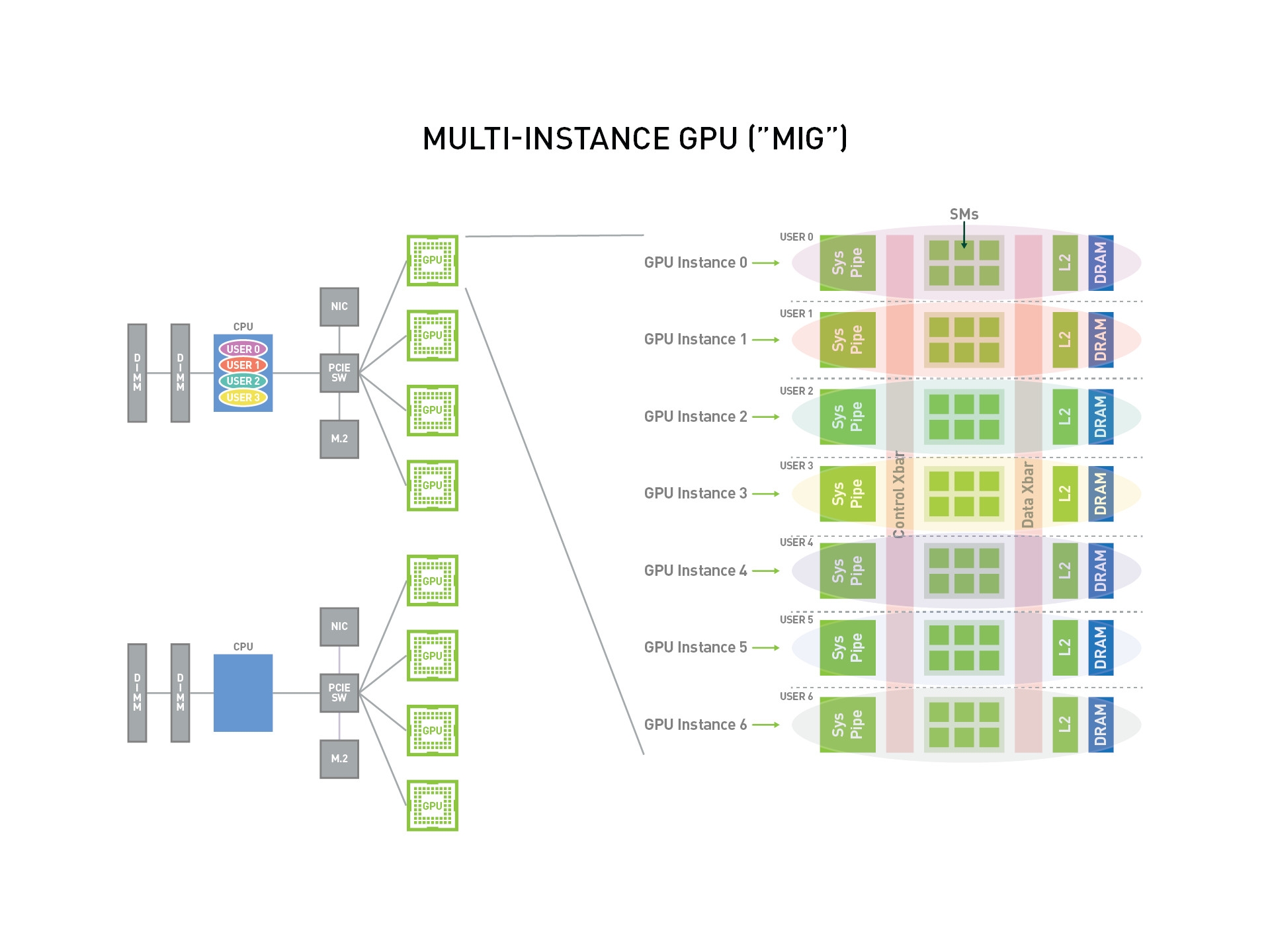

The GPU list shows 6x A100.40gb GPUs as well as 9x 1g.5gb, 1x 2g.10gb and 1x 3g.20gb resources. The latter three types of resources are a product of a partitioning scheme called Multi-Instance GPU (MIG).

GPU partitioning

The DGX A100 has eight NVIDIA A100 GPUs, which can be further partitioned into smaller slices to optimize access and utilization. For example, each GPU can be sliced into as many as seven instances when it operates in MIG (Multi-Instance GPU) mode.

GPU Instance Profiles on A100

Our DGX is currently configured so that six of the eight A100 GPUs (GPU IDs 0-5) are not partitioned, while the last two (GPU IDs 6 and 7) are partitioned into slices of different sizes.
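Because the layout may change, you can check the current split on the DGX with `nvidia-smi -L`, which lists each GPU and any MIG devices under it. The sketch below summarizes that output; it assumes the usual layout where MIG devices appear on lines starting with `MIG <profile>`, and inside a job `nvidia-smi` may only show the devices allocated to you. The helper name is hypothetical.

```bash
# Summarize 'nvidia-smi -L': number of physical GPUs and MIG slices per profile.
mig_summary() {
  nvidia-smi -L | awk '
    $1 == "GPU" { gpus++ }           # one line per physical GPU
    $1 == "MIG" { slices[$2]++ }     # e.g. "MIG 1g.5gb Device 0: ..."
    END {
      printf "GPUs: %d\n", gpus
      for (p in slices) printf "%s: %d\n", p, slices[p]
    }'
}
```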

The way the GPUs are partitioned will likely change over time to optimize utilization.

The best way to take advantage of MIG is to analyze the demands of your job and determine which available GPU size suits it. For example, if your simulation uses very little GPU memory, you would be better off using a 1g.5gb slice and leaving the bigger partitions to jobs that need more GPU memory. Another consideration for machine learning jobs is the difference between the demands of training and inference tasks. Training tasks are more compute- and memory-intensive, so they are a better fit for a full GPU or a large partition, while inference tasks often run well on smaller slices.
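The memory-based part of this reasoning can be sketched as a hypothetical helper that picks the smallest GPU resource on the DGX whose memory covers a job's needs. The GRES names are the ones listed for the `gpuq` partition on this page; sizing by memory alone is a simplification, since compute demands matter too, and the input must be a whole number of GB.

```bash
# Pick the smallest gpuq GPU resource whose memory covers the job's need (GB).
pick_gpu_gres() {
  local need_gb="$1"
  if   (( need_gb <= 5 ));  then echo "1g.5gb"
  elif (( need_gb <= 10 )); then echo "2g.10gb"
  elif (( need_gb <= 20 )); then echo "3g.20gb"
  elif (( need_gb <= 40 )); then echo "A100.40gb"
  else
    echo "job needs more than one GPU's memory" >&2
    return 1
  fi
}

# usage: salloc --partition=gpuq --qos=gpu --gres="gpu:$(pick_gpu_gres 8):1" ...
```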

Interactive Mode

You can request interactive access to the DGX A100 server through SLURM as follows:

Once your reservation is available, you will be logged into the DGX automatically:
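A sketch of such a request as a hypothetical helper that takes the GPU type as an argument (for example `A100.40gb`, `3g.20gb`, `2g.10gb` or `1g.5gb`, as listed for `gpuq` on this page) and an optional wall time in hours. The partition and QoS come from this page; the core count, memory and default time are illustrative assumptions.

```bash
# Hypothetical helper: interactive DGX session on one GPU resource of type $1
# for $2 hours (default 1). Cores and memory are illustrative assumptions.
dgx_interactive() {
  if [ -z "$1" ]; then
    echo "usage: dgx_interactive <gpu-type> [hours]" >&2
    return 2
  fi
  local gres="$1" hours="${2:-1}"
  salloc --partition=gpuq --qos=gpu --ntasks=1 --cpus-per-task=4 \
         --mem=32G --gres="gpu:${gres}:1" --time="${hours}:00:00"
}

# usage: dgx_interactive 1g.5gb 2
```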

To run the container while connected:

As an example, the following command runs a Python script using a TensorFlow container:
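A sketch of both cases, an interactive shell inside the container and a one-off Python run, assuming a hypothetical image name that follows this page's naming convention (list `$SINGULARITY_BASE` to see which TensorFlow images actually exist). `--nv` makes the NVIDIA GPUs and driver libraries available inside the container; depending on the image, the interpreter may be `python3` rather than `python`.

```bash
# Hypothetical image name following the naming convention on this page.
TF_IMAGE="${SINGULARITY_BASE:-/containers/dgx/Containers}/tensorflow_20.11-tf2-py3.sif"

# Open an interactive shell inside the container with GPU support.
tf_shell() {
  singularity shell --nv "$TF_IMAGE"
}

# Run a Python script (plus any arguments) inside the container.
run_tf_script() {
  singularity exec --nv "$TF_IMAGE" python "$@"
}

# usage: run_tf_script my_model.py   (my_model.py is a placeholder)
```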

You can run on any one or more of the GPUs, which are indexed 0-7. Since this is a shared resource, we encourage you to monitor GPU usage and select idle GPU(s) when running jobs interactively. For example, if the output of the nvidia-smi command shows that the GPUs indexed 0, 1 and 2 are in active use, you should run your jobs on one of the other GPUs.
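One way to spot idle GPUs is to query `nvidia-smi` in CSV form. The sketch below treats a GPU as idle when it shows 0% utilization and under 100 MiB of memory in use; that threshold is an arbitrary assumption, and the helper name is hypothetical.

```bash
# Print a comma-separated list of GPU indices that currently look idle.
idle_gpus() {
  nvidia-smi --query-gpu=index,utilization.gpu,memory.used \
             --format=csv,noheader,nounits |
    # Only rows whose utilization reads as 0 match, so rows showing
    # [N/A] (as MIG-enabled GPUs may) are skipped.
    awk -F', *' '$2 == 0 && $3 < 100 { printf "%s%s", sep, $1; sep="," }
                 END { print "" }'
}

# usage: idle_gpus   # e.g. prints 3,4,5 when those GPUs are unused
```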

To select particular GPU(s), you can use the SINGULARITYENV_CUDA_VISIBLE_DEVICES environment variable. For example, you can select the first and third GPUs (indices 0 and 2) by setting it to 0,2.
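In bash this is a single export. Singularity strips the `SINGULARITYENV_` prefix and sets `CUDA_VISIBLE_DEVICES` inside containers started from this shell.

```bash
# Make only the first and third GPUs (indices 0 and 2) visible inside
# Singularity containers started from this shell.
export SINGULARITYENV_CUDA_VISIBLE_DEVICES=0,2
```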

SLURM stores the indices of the GPUs assigned to your job in the SLURM_JOB_GPUS environment variable, so you can set SINGULARITYENV_CUDA_VISIBLE_DEVICES to $SLURM_JOB_GPUS.

For example, the following commands will run on however many GPUs are assigned to you:
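A sketch of that pattern as a hypothetical helper: it forwards whatever GPUs SLURM assigned to the job into the container and then runs the given command there. The image path is whatever `.sif` you are using; the helper refuses to run when `SLURM_JOB_GPUS` is not set, which usually means you are not inside a GPU job.

```bash
# Run a command in a container on the GPUs SLURM assigned to this job.
run_on_job_gpus() {
  local image="$1"; shift
  if [ -z "${SLURM_JOB_GPUS:-}" ]; then
    echo "SLURM_JOB_GPUS is not set; are you inside a GPU job?" >&2
    return 1
  fi
  SINGULARITYENV_CUDA_VISIBLE_DEVICES="$SLURM_JOB_GPUS" \
    singularity exec --nv "$image" "$@"
}

# usage: run_on_job_gpus "$SINGULARITY_BASE/<image>.sif" nvidia-smi
```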

While you are on the server, you can use these tools to monitor the GPU usage:

nvidia-smi: has lots of options to set and monitor GPUs

nvitop -m: great for live monitoring of GPU usage, complete with color coding

nvtop: ncurses-based GPU monitoring similar to 'htop'

Please remember to log out of the DGX A100 server when you finish running your interactive job.

Batch Mode

Below is a sample SLURM batch submission file you can use as an example to submit your jobs. Save the information into a file (say run.slurm), and submit it by entering sbatch run.slurm. Please update <N_CPU_CORES>, <MEM_PER_CORE> and <N_GPUs> to reflect the number of CPU cores and GPUs you need. Please note that the DGX has 128 CPU cores, 8 GPUs and 1TB of system memory.

We encourage the use of environment variables to make the job submission file cleaner and easier to reuse.
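A sketch of what such a submission file might contain, written out with a quoted heredoc so nothing is expanded until the job runs. The partition, QoS, GRES name and `singularity` module come from this page; the resource values stand in for `<N_CPU_CORES>`, `<MEM_PER_CORE>` and `<N_GPUs>` and are illustrative, and the image and script paths are hypothetical placeholders.

```bash
# Creates run.slurm in the current directory. All values are illustrative.
cat > run.slurm <<'EOF'
#!/bin/bash
#SBATCH --job-name=dgx-example
#SBATCH --partition=gpuq
#SBATCH --qos=gpu
#SBATCH --nodes=1
#SBATCH --ntasks=1
# <N_CPU_CORES>: CPU cores for the job
#SBATCH --cpus-per-task=8
# <MEM_PER_CORE>: memory per CPU core
#SBATCH --mem-per-cpu=8G
# <N_GPUs>: number (and type) of GPUs
#SBATCH --gres=gpu:A100.40gb:1
#SBATCH --time=0-02:00:00
#SBATCH --output=%x-%j.out

# Environment variables keep the run line short and easy to reuse.
# IMAGE and SCRIPT are hypothetical placeholders.
IMAGE="${SINGULARITY_BASE:-/containers/dgx/Containers}/tensorflow_20.11-tf2-py3.sif"
SCRIPT="$HOME/my_model.py"

module load singularity
# Expose only the GPUs SLURM assigned to this job inside the container.
export SINGULARITYENV_CUDA_VISIBLE_DEVICES="$SLURM_JOB_GPUS"
singularity exec --nv "$IMAGE" python "$SCRIPT"
EOF
```

You would then submit it from Hopper with `sbatch run.slurm`. Comments are kept on their own lines rather than after the `#SBATCH` directives to avoid any ambiguity in how the directives are parsed.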

The syntax for running different containers varies depending on the application. Please check the NGC page for more instructions on running these containers using Singularity.

Storage Locations

Currently, these locations have been designated for storing shared and user-specific containers.

Containers

Shared:

/containers/dgx/Containers

User-specific:

/containers/dgx/UserContainers/$USER

Examples

Containerized applications:

/containers/dgx/Examples

Native applications:

/groups/ORC-VAST/app-tests

Sample Runs

We provide some sample calculations to facilitate setting up and running calculations:

examples demonstrating how to run different applications are available in

/containers/dgx/Examples

examples on running native and containerized applications are available here:

/groups/ORC-VAST/app-tests

The examples at https://gitlab.com/NVHPC/ngc-examples are also helpful. For many applications there are no instructions on running the containers with Singularity, but you should be able to build a Singularity image from the Docker image and run it.
